March 21, 2019

Make deep learning faster and simpler

Techniques to make deep learning faster, require less storage and be easier for programmers to code

WEST LAFAYETTE, Ind. – Artificial intelligence systems based on deep learning are changing the electronic devices that surround us.

The results of this deep learning is something seen each time a computer understands our speech, we search for a picture of a friend or we see an appropriately placed ad. But the deep learning itself requires enormous clusters of computers and weeklong runs.

Researchers at Purdue University and Maynooth University have developed techniques to make deep learning faster and require less storage. (Stock photo)

Download image

Researchers at Purdue University and Maynooth University have developed techniques to make deep learning faster and require less storage. (Stock photo)

Download image

“Methods developed by our international team will reduce this burden,” said Jeffrey Mark Siskind, professor of electrical and computer engineering in Purdue’s College of Engineering. “Our methods allow individuals with more modest computers to do the kinds of deep learning that used to require multimillion dollar clusters, and allow programmers to write programs in hours which used to require months.”

Deep learning uses a particular kind of calculus at its heart: a clever technique, called automatic differentiation (AD) in the reverse accumulation mode, for efficiently calculating how adjustments to a large number of controls will affect a result.

“Sophisticated software systems and gigantic computer clusters have been built to perform this particular calculation,” said Barak Pearlmutter, professor of computer science at Maynooth University in Ireland, and the other principal of this collaboration. “These systems underlie much of the AI in society: speech recognition, internet search, image understanding, face recognition, machine translation and the placement of advertisements.”

One major limitation on these deep learning systems is that they support this particular AD calculation very rigidly.

“These systems only work on very restricted kinds of computer programs: ones that consume numbers on their input, perform the same numeric operations on them regardless of their values, and output the resulting numbers,” Siskind said.

The researchers said another limitation is that the AD operation requires a great deal of computer memory. These restrictions limit the size and sophistication of the deep learning systems that can be built. For example, they make it difficult to build a deep learning system that performs a variable amount of computation depending on the difficulty of the particular input, one that tries to anticipate the actions of an intelligent adaptive user, or one that produces as its output a computer program.

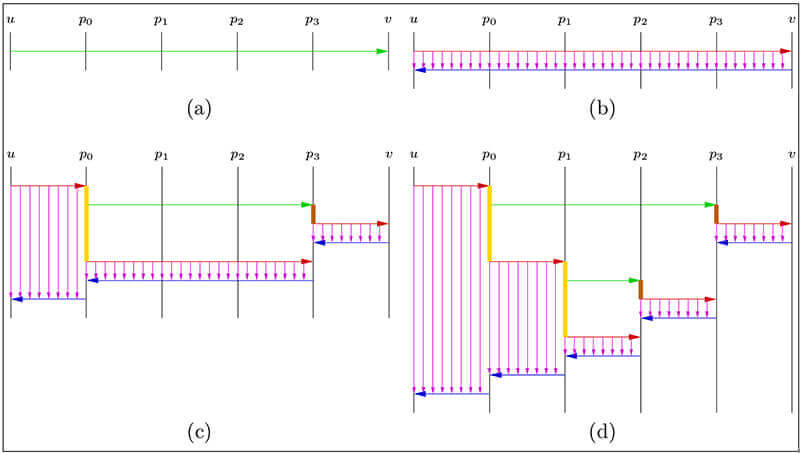

Researchers are using automatic differentiation and other techniques to make deep learning faster and simpler. (Image provided)

Download image

Researchers are using automatic differentiation and other techniques to make deep learning faster and simpler. (Image provided)

Download image

Siskind said the collaboration is aimed at lifting these restrictions.

A series of innovations allows not just reverse-mode AD, but other modes of AD, to be used efficiently; for these operations to be cascaded, and applied not just to rigid computations but also to arbitrary computer programs; for increasing the efficiency of these processes; and for greatly reducing the amount of required computer memory.

“Usually these sorts of gains come at the price of increasing the burden on computer programmers,” Siskind said. “Here, the techniques developed allow this increased flexibility and efficiency while greatly reducing the work that computer programmers building AI systems will need to do.”

For example, a technique called "checkpoint reverse AD" for reducing the memory requirements was previously known, but could only be applied in limited settings, was very cumbersome, and required a great deal of extra work from the computer programmers building the deep learning systems.

One method developed by the team allows the reduction of memory requirements to apply to any computer program, and requires no extra work from the computer programmers building the AI systems.

“The massive reduction in RAM required for training AI systems should allow more sophisticated systems to be built, and should allow machine learning to be performed on smaller machines – smart phones instead of enormous computer clusters,” Siskind said.

As a whole, this technology has the potential to make it much easier to build sophisticated deep-learning-based AI systems.

“These theoretical advances are being built into a highly efficient full-featured implementation which runs on both CPUs and GPUs and supports a wide range of standard components used to build deep-learning models,” Siskind said.

The work is supported by the National Science Foundation, the Defense Advanced Research Projects Agency, the Intelligence Advanced Research Projects Activity and the Science Foundation Ireland.

Their work aligns with Purdue's Giant Leaps celebration, celebrating the global advancements in artificial intelligence as part of Purdue’s 150th anniversary. AI, including machine and deep learning, is one of the four themes of the yearlong celebration’s Ideas Festival, designed to showcase Purdue as an intellectual center solving real-world issues.

Siskind and Pearlmutter have worked with the Purdue Research Foundation Office of Technology Commercialization, in coordination with its counterpart at Maynooth University, to patent their innovations. They are looking for additional research partners or licensees.

About Purdue Research Foundation Office of Technology Commercialization

The Office of Technology Commercialization operates one of the most comprehensive technology transfer programs among leading research universities in the U.S. Services provided by this office support the economic development initiatives of Purdue University and benefit the university's academic activities. The office is managed by the Purdue Research Foundation, which received the 2016 Innovation and Economic Prosperity Universities Award for Innovation from the Association of Public and Land-grant Universities. For more information about funding and investment opportunities in startups based on a Purdue innovation, contact the Purdue Foundry at foundry@prf.org. For more information on licensing a Purdue innovation, contact the Office of Technology Commercialization at otcip@prf.org. The Purdue Research Foundation is a private, nonprofit foundation created to advance the mission of Purdue University.

Writer: Chris Adam, 765-588-3341, cladam@prf.org

Sources: Jeffrey Mark Siskind, qobi@purdue.edu

Barak Pearlmutter, barak@pearlmutter.net