Brain-inspired AI hardware helps autonomous devices operate efficiently and independently

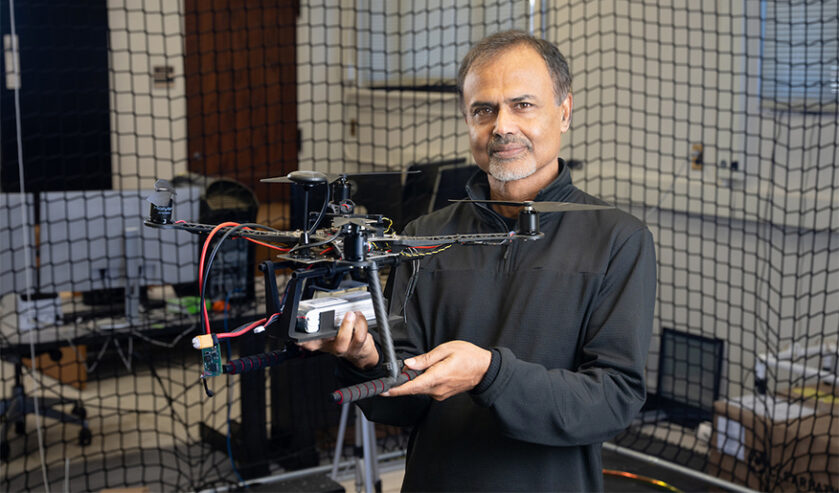

Kaushik Roy, the Edward G. Tiedemann, Jr. Distinguished Professor of Electrical and Computer Engineering and director of the Institute of Chips and AI, holds a drone that uses his brain-inspired hardware to navigate its surroundings. (Purdue University photo/John Underwood)

WEST LAFAYETTE, Ind. — The human brain constantly makes decisions without us noticing. It requires minimal power to move our bodies in the desired direction or avoid an object.

A Purdue University engineer uses the brain’s efficiency as inspiration to help autonomous vehicles, such as drones and robots, make crucial, time-sensitive decisions while operating in the field.

Kaushik Roy, the Edward G. Tiedemann, Jr. Distinguished Professor of Electrical and Computer Engineering in Purdue’s Elmore Family School of Electrical and Computer Engineering and director of the Institute of Chips and AI, is developing brain-inspired hardware that enables autonomous devices to efficiently navigate and adapt to their environment.

AI-powered machines have advanced significantly over the past several decades thanks to machine learning, which enables these devices to recognize patterns and make predictions or decisions. But the algorithms that facilitate this learning require immense amounts of energy to operate due to their intensive calculations and the design of the hardware that runs them.

“Today’s AI devices are designed with separate processing and memory units,” Roy said. “It takes a lot of energy to move the data from the memory to the processing unit and then perform all these complex operations. This is particularly problematic for machines like drones that need to process information quickly and efficiently to avoid obstacles while completing their assigned tasks.”

To solve this energy problem, Roy and his team in the Nanoelectronics Research Laboratory are developing a system of sensors, algorithms and hardware that allow autonomous, vision-based vehicles to move from point A to B while avoiding obstacles, optimizing energy use and operating independently.

“From the little we understand of the brain, computation and memory are not separated, essentially making it the most efficient processor imaginable,” Roy said. “That’s why we’re taking more direct cues from the brain and co-designing hardware and algorithms that will optimize a variety of AI devices.”

Algorithms power AI cognition

At the heart of this system are algorithms called spiking neural networks (SNNs). All neural networks are composed of layers of artificial neurons that activate when presented with information, much like how a biological neuron works within the brain. However, unlike the brain, all the neurons in a traditional neural network activate with every input of information, thereby expending large amounts of energy with every calculation and every decision or action taken by the network.

On the other hand, the individual neurons in SNNs only fire, or “spike,” when they receive important information. What is deemed “important” to a particular neuron depends on its membrane potential, an internal value that builds up as inputs arrive and must reach an assigned threshold before the neuron activates. Incoming data must push the potential to that threshold for the neuron to spike and produce a reaction. Therefore, each neuron only processes and stores “memories” relevant to its function.

“The membrane potential of each neuron acts as memory, allowing the network to remember the past, much like biological neurons do,” Roy said. “This behavior turns out to be very useful for sequential and time-based tasks. These are the types of tasks that drones and other autonomous vehicles are performing as they collect information from their environment and use it to make decisions about what to do next.”
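The spiking behavior described above can be illustrated with a leaky integrate-and-fire neuron, a common textbook model. This is a minimal sketch, not the team's implementation: the function name `lif_neuron` and the `threshold` and `leak` values are assumptions chosen for illustration.

```python
def lif_neuron(inputs, threshold=1.0, leak=0.9):
    """Simulate one leaky integrate-and-fire neuron over a sequence of inputs.

    threshold and leak are illustrative values, not taken from the article.
    """
    potential = 0.0  # membrane potential: the neuron's running "memory"
    spikes = []
    for x in inputs:
        # Keep a decayed share of the past potential, then add the new input.
        potential = leak * potential + x
        if potential >= threshold:
            spikes.append(1)  # the neuron fires
            potential = 0.0   # and resets after the spike
        else:
            spikes.append(0)  # the neuron stays silent
    return spikes


print(lif_neuron([0.3, 0.3, 0.3, 0.3, 0.0, 1.2]))  # [0, 0, 0, 1, 0, 1]
```

In this sketch, no single 0.3 input is enough to trigger a spike. The neuron fires on the fourth step only because the membrane potential has retained part of the earlier inputs.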

While a neural network that fires selectively is a strength in terms of processing power, it introduces a weakness in training. Traditional neural networks learn from their mistakes by relying on backpropagation — a constant flow of information through the network’s layers of neurons that helps figure out where and how mistakes occurred. The selective firing of SNNs produces inconsistent activity and less information. And while the timing of a spike is critical to improving an SNN, the backpropagation in a traditional system is designed to track only where errors occur, not when.

To address these problems, Roy and his team have developed hybrid neural networks that combine strengths of both traditional neural networks and SNNs. This combination captures timing information effectively while remaining trainable and compact enough for autonomous devices.

Event-based cameras enhance navigation

Two such algorithms, Spike-FlowNet and the Adaptive Safety Margin Algorithm (ASMA), help special event-based cameras attached to the vehicles scan and process their environment more effectively.

Much like the individual neurons in an SNN, the individual pixels in an event-based camera operate independently, and the camera only records when there’s a movement or change happening in the pixels. This differs from traditional cameras, which record an entire scene — all the pixels at all times.
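The contrast with a frame-based camera can be sketched in a few lines. The example below is a simplification under stated assumptions: it compares two small brightness grids and reports only the pixels that changed, whereas a real event-based camera reports changes per pixel asynchronously in hardware. The function name `events_between` and the `threshold` value are hypothetical.

```python
def events_between(prev_frame, next_frame, threshold=0.2):
    """Return (row, col, polarity) for each pixel whose brightness changed.

    Frames are lists of rows of brightness values. Polarity is +1 for
    brighter and -1 for darker. The threshold is an illustrative value.
    """
    events = []
    for r, (prev_row, next_row) in enumerate(zip(prev_frame, next_frame)):
        for c, (before, after) in enumerate(zip(prev_row, next_row)):
            change = after - before
            if abs(change) >= threshold:
                events.append((r, c, 1 if change > 0 else -1))
    return events


prev_frame = [[0.0, 0.5, 0.5],
              [0.5, 0.5, 0.5]]
next_frame = [[0.0, 0.5, 1.0],
              [0.0, 0.5, 0.5]]

print(events_between(prev_frame, next_frame))  # [(0, 2, 1), (1, 0, -1)]
```

Only two of the six pixels produce output here. A traditional camera would deliver all six values for every frame, whether or not anything changed.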

The cameras mimic how humans interpret visual data through two key aspects of the visual system: rapid eye movements and how the eye focuses. This approach helps event-based cameras process a scene more quickly by prioritizing regions of interest over frame-by-frame computation.

“Human visual systems focus sharply on a specific region and use rapid eye movements to scan a scene efficiently,” Roy said. “Our work incorporates these mechanisms into artificial vision systems so they focus their computational resources on the most relevant parts of a scene, like a moving object, rather than processing everything equally.”
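One simple way to picture this kind of prioritization is to find the patch of the scene with the most activity and spend computation there first. The sketch below is only an illustration of that idea and is not the team's foveation and saccade method. The function name `busiest_window`, the event tuple format and the window size are all assumptions.

```python
def busiest_window(events, height, width, size):
    """Return (top, left) of the size x size window containing the most events.

    events is a list of (row, col, polarity) tuples, a hypothetical format.
    """
    # Count how many events landed on each pixel.
    counts = [[0] * width for _ in range(height)]
    for r, c, _polarity in events:
        counts[r][c] += 1

    best_total, best_pos = -1, (0, 0)
    # Slide the window over every position and keep the busiest one.
    for top in range(height - size + 1):
        for left in range(width - size + 1):
            total = sum(
                counts[r][c]
                for r in range(top, top + size)
                for c in range(left, left + size)
            )
            if total > best_total:
                best_total, best_pos = total, (top, left)
    return best_pos


events = [(2, 3, 1), (3, 3, -1), (3, 2, 1), (0, 0, 1)]
print(busiest_window(events, height=4, width=4, size=2))  # (2, 2)
```

Three of the four events fall in the lower-right corner, so that window is selected and the rest of the scene can be given less attention.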

Roy and his team have tested this technology on a drone, with the vehicle successfully navigating around moving rings in real time.

“Using vision sensors only, the drone can avoid stationary and moving objects and reach its target without collision,” Roy said. “While doing this, it has to determine how objects move in the visual field, estimate depth and then plan a path. These are time-dependent operations, where understanding how things change over time is critical.”

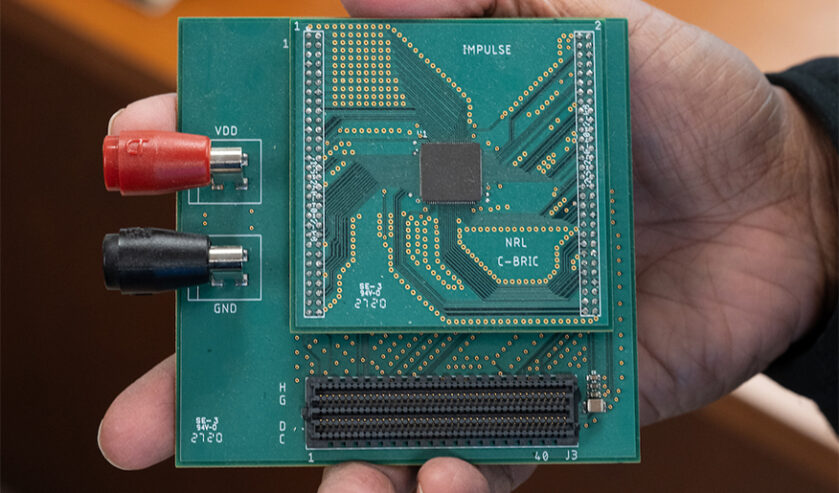

Computation and memory converge in specialized hardware

Hardware, the final component of Roy’s system, is currently under development. He aims to harness in-memory computing to eliminate what is termed the von Neumann bottleneck: the pathway that data must travel between a computer’s central processing unit and memory, which often results in computational lags.

The hardware in development effectively eliminates that pathway by mapping computational operations and processes directly onto a memory chip.

One device, an electronic synapse that mimics how the brain learns, works by sending an electrical current through a layer of metal that then produces an effect called spin-orbit torque.

Spin-orbit torque works by moving regions of a magnetic layer in different directions depending on the timing and strength of the current. The device learns when the electrical current physically reshapes the magnetic structure, influencing how strongly the current passes in the future.

Devices like the electronic synapse reduce power consumption and, most importantly, operate without internet connections, which is crucial for autonomous devices out in the field.

While the demonstrations use drones, the same brain-inspired architectures could apply to ground robots, autonomous vehicles, wearables and other embedded AI systems that need real-time perception and decision-making under energy constraints.

Roy’s work is supported by the Center for the Co-design of Cognitive Systems, a Joint University Microelectronics Program 2.0 center, part of the Semiconductor Research Corp. program sponsored by the Defense Advanced Research Projects Agency and the National Science Foundation. The Elmore Family School of Electrical and Computer Engineering is one of Purdue’s computing departments, which are part of Purdue Computes, a strategic initiative to advance Purdue’s research and educational excellence in computing.

About Purdue University

Purdue University is a public research university leading with excellence at scale. Ranked among the top 10 public universities in the United States, Purdue discovers, disseminates and deploys knowledge with a quality and at a scale second to none. More than 106,000 students study at Purdue across multiple campuses, locations and modalities, including more than 57,000 at our main campus locations in West Lafayette and Indianapolis. Committed to affordability and accessibility, Purdue’s main campus has frozen tuition 14 years in a row. See how Purdue never stops in the persistent pursuit of the next giant leap, including its integrated, comprehensive Indianapolis urban expansion; the Mitch Daniels School of Business; Purdue Computes; and the One Health initiative, at https://www.purdue.edu/president/strategic-initiatives.

Papers

Neuromorphic computing for robotic vision: Algorithms to hardware advances

Communications Engineering

DOI: https://doi.org/10.1038/s44172-025-00492-5

Breaking the memory wall: Next-generation artificial intelligence hardware

Frontiers in Science

DOI: https://doi.org/10.3389/fsci.2025.1611658

Spike-FlowNet: Event-based optical flow estimation with energy-efficient hybrid neural networks

European Conference on Computer Vision

DOI: https://doi.org/10.1007/978-3-030-58526-6_22

ASMA: An Adaptive Safety Margin Algorithm for vision-language drone navigation via scene-aware control barrier functions

IEEE Robotics and Automation Letters

DOI: https://doi.org/10.1109/LRA.2025.3592138

Exploring foveation and saccade for improved weakly-supervised localization

Proceedings of the 2nd Gaze Meets Machine Learning Workshop

URL: https://proceedings.mlr.press/v226/ibrayev24a

Media contact: Trevor Peters, peter237@purdue.edu