Autonomous vehicles could understand their passengers better with ChatGPT, research shows

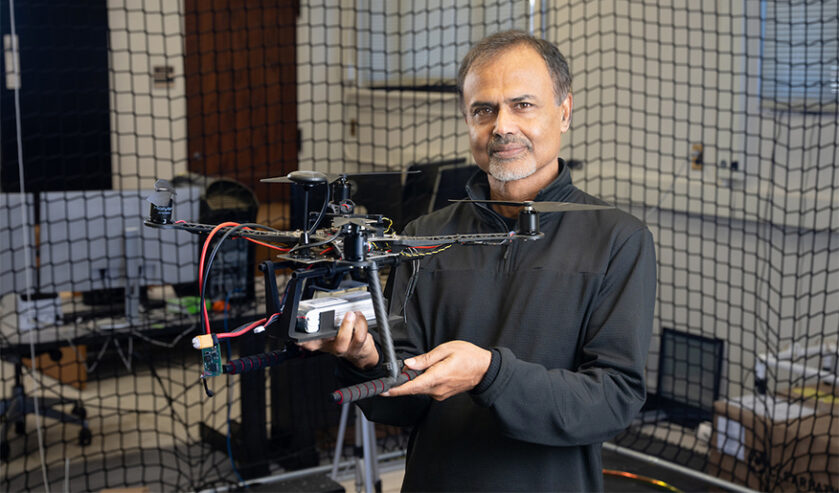

Purdue University assistant professor Ziran Wang stands next to a test autonomous vehicle that he and his students equipped to interpret commands from passengers using ChatGPT or other large language models. (Purdue University photo/John Underwood)

WEST LAFAYETTE, Ind. — Imagine simply telling your vehicle, “I’m in a hurry,” and it automatically takes you on the most efficient route to where you need to be.

Purdue University engineers have found that an autonomous vehicle (AV) can do this with the help of ChatGPT or other chatbots made possible by artificial intelligence algorithms called large language models.

The study, to be presented Sept. 25 at the 27th IEEE International Conference on Intelligent Transportation Systems, may be among the first experiments testing how well a real AV can use large language models to interpret commands from a passenger and drive accordingly.

Ziran Wang, an assistant professor in Purdue’s Lyles School of Civil and Construction Engineering who led the study, believes that for vehicles to be fully autonomous one day, they’ll need to understand everything that their passengers command, even when the command is implied. A taxi driver, for example, would know what you need when you say that you’re in a hurry without you having to specify the route the driver should take to avoid traffic.

Although today’s AVs come with features that allow you to communicate with them, they need you to be clearer than would be necessary if you were talking to a human. In contrast, large language models can interpret and give responses in a more humanlike way because they are trained to draw relationships from huge amounts of text data and keep learning over time.

“The conventional systems in our vehicles have a user interface design where you have to press buttons to convey what you want, or an audio recognition system that requires you to be very explicit when you speak so that your vehicle can understand you,” Wang said. “But the power of large language models is that they can more naturally understand all kinds of things you say. I don’t think any other existing system can do that.”

Conducting a new kind of study

In this study, large language models didn’t drive the AV. Instead, they assisted the AV’s driving using its existing features. By integrating these models, Wang and his students found that an AV could not only understand its passenger better but also personalize its driving to the passenger’s satisfaction.

Before starting their experiments, the researchers trained ChatGPT with prompts that ranged from more direct commands (e.g., “Please drive faster”) to more indirect commands (e.g., “I feel a bit motion sick right now”). As ChatGPT learned how to respond to these commands, the researchers gave its large language models parameters to follow, requiring them to take into consideration traffic rules, road conditions, the weather and other information detected by the vehicle’s sensors, such as cameras and light detection and ranging (lidar).
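As a rough illustration of how a passenger's words might be combined with such constraints, here is a minimal Python sketch. It is not the researchers' code: the `DrivingContext` fields, the `build_prompt` function and the prompt wording are all hypothetical and chosen only to show the idea.

```python
from dataclasses import dataclass


@dataclass
class DrivingContext:
    """Hypothetical snapshot of what the vehicle knows; fields are illustrative."""
    speed_limit_mph: float
    road_condition: str  # e.g. "dry" or "wet"
    weather: str         # e.g. "clear" or "rain"


def build_prompt(command: str, ctx: DrivingContext) -> str:
    """Combine the passenger's words with limits the model is asked to respect."""
    return (
        "You assist an autonomous vehicle. Obey traffic rules at all times.\n"
        f"Speed limit: {ctx.speed_limit_mph:g} mph\n"
        f"Road condition: {ctx.road_condition}\n"
        f"Weather: {ctx.weather}\n"
        f'Passenger said: "{command}"\n'
        "Reply with a driving style adjustment that stays within these limits."
    )


prompt = build_prompt(
    "I feel a bit motion sick right now",
    DrivingContext(speed_limit_mph=45, road_condition="wet", weather="rain"),
)
print(prompt)
```

An indirect command such as the motion-sickness example carries no explicit instruction, so the surrounding context is what lets a model infer a gentler driving style while staying inside the stated limits.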

The researchers then made these large language models accessible over the cloud to an experimental vehicle with level four autonomy as defined by SAE International. Level four is one level away from what the industry considers to be a fully autonomous vehicle.

When the vehicle’s speech recognition system detected a command from a passenger during the experiments, the large language models in the cloud reasoned about the command using the parameters the researchers defined. Those models then generated instructions for the vehicle’s drive-by-wire system, which is connected to the throttle, brakes, gears and steering, on how to drive according to that command.
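One way to picture the step between the model's reply and the vehicle's controls is a small validation layer that bounds whatever the model suggests. The Python sketch below assumes, purely for illustration, that the model replies in JSON with two fields. The field names, the numeric limits and the fallback defaults are hypothetical and do not come from the study.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class DrivingParams:
    target_speed_mph: float
    max_accel_mps2: float


# Hypothetical conservative values used when the reply cannot be trusted.
DEFAULT = DrivingParams(target_speed_mph=30.0, max_accel_mps2=1.5)


def clamp(value: float, low: float, high: float) -> float:
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def parse_reply(reply: str, speed_limit_mph: float) -> DrivingParams:
    """Turn a JSON reply into driving parameters bounded by hard limits."""
    try:
        data = json.loads(reply)
        speed = float(data["target_speed_mph"])
        accel = float(data["max_accel_mps2"])
    except (ValueError, KeyError, TypeError):
        # Malformed or incomplete reply: fall back to conservative defaults.
        return DrivingParams(
            min(DEFAULT.target_speed_mph, speed_limit_mph),
            DEFAULT.max_accel_mps2,
        )
    return DrivingParams(
        target_speed_mph=clamp(speed, 0.0, speed_limit_mph),
        max_accel_mps2=clamp(accel, 0.5, 3.0),
    )


# A request above the limit is capped; free text falls back to defaults.
print(parse_reply('{"target_speed_mph": 80, "max_accel_mps2": 5}', 55.0))
print(parse_reply("drive faster!", 55.0))
```

Keeping the limits outside the model means a misread command can change the ride's style but not push the vehicle past bounds set elsewhere in the system.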

For some of the experiments, Wang’s team also tested a memory module they had installed into the system that allowed the large language models to store data about the passenger’s historical preferences and learn how to factor them into a response to a command.
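The release does not describe how the memory module was built. As a hedged sketch of the general idea, the Python class below keeps a short, per-passenger list of notes and turns it into text that could accompany a new command. The class name `PreferenceMemory`, its methods and the five-item limit are assumptions made for this example.

```python
from collections import defaultdict, deque


class PreferenceMemory:
    """Hypothetical store of each passenger's most recent preference notes."""

    def __init__(self, max_items: int = 5) -> None:
        self._max_items = max_items
        # Each passenger gets a bounded queue; the oldest notes drop off first.
        self._history = defaultdict(lambda: deque(maxlen=self._max_items))

    def remember(self, passenger_id: str, note: str) -> None:
        """Record one observation about a passenger."""
        self._history[passenger_id].append(note)

    def as_prompt_context(self, passenger_id: str) -> str:
        """Summarize stored notes as text to include with a new command."""
        notes = self._history.get(passenger_id)
        if not notes:
            return "No known preferences."
        return "Known preferences: " + "; ".join(notes)


memory = PreferenceMemory(max_items=2)
memory.remember("rider-1", "prefers gentle braking")
memory.remember("rider-1", "dislikes fast lane changes")
print(memory.as_prompt_context("rider-1"))
```

Bounding the history keeps the added text short, which matters when every extra word sent to a cloud model adds to processing time.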

The researchers conducted most of the experiments at a proving ground in Columbus, Indiana, which used to be an airport runway. This environment allowed them to safely test the vehicle’s responses to a passenger’s commands while driving at highway speeds on the runway and handling two-way intersections. They also tested how well the vehicle parked according to a passenger’s commands in the lot of Purdue’s Ross-Ade Stadium.

While riding in the vehicle, the study participants used both commands that the large language models had learned and commands that were new. Based on their survey responses after their rides, the participants reported a lower rate of discomfort with the decisions the AV made than existing data show for people riding in a level four AV with no assistance from large language models.

The team also compared the AV’s performance to baseline values created from data on what people would consider on average to be a safe and comfortable ride, such as how much time the vehicle allows for a reaction to avoid a rear-end collision and how quickly the vehicle accelerates and decelerates. The researchers found that the AV in this study outperformed all baseline values while using the large language models to drive, even when responding to commands the models hadn’t already learned.

Future directions

The large language models in this study averaged 1.6 seconds to process a passenger’s command, which is considered acceptable in non-time-critical scenarios but should be improved upon for situations when an AV needs to respond faster, Wang said. This is a problem that affects large language models in general and is being tackled by the industry as well as by university researchers.
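To put the 1.6-second figure in context, a short calculation shows how far a vehicle travels while a command is being processed. The Python sketch below is simple arithmetic, distance equals speed times time. The function name and the 60 mph example speed are illustrative choices, not values from the study.

```python
MPH_TO_MPS = 0.44704  # exact: 1 mile = 1609.344 m and 1 hour = 3600 s


def distance_during_latency(speed_mph: float, latency_s: float = 1.6) -> float:
    """Meters traveled at a constant speed while a command is processed."""
    return speed_mph * MPH_TO_MPS * latency_s


# At an illustrative 60 mph, 1.6 seconds corresponds to roughly 43 meters.
print(f"{distance_during_latency(60):.1f} m")
```

That distance is unimportant for a request like a change in driving style, but it suggests why faster processing matters for any situation that depends on a quick reaction.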

Although not the focus of this study, it’s known that large language models like ChatGPT are prone to “hallucinate,” which means that they can misinterpret something they learned and respond in the wrong way. Wang’s study was conducted in a setup with a fail-safe mechanism that allowed participants to ride safely when the large language models misunderstood commands. The models improved in their understanding throughout a participant’s ride, but hallucination remains an issue that must be addressed before vehicle manufacturers consider integrating large language models into AVs.

Vehicle manufacturers also would need to do much more testing with large language models on top of the studies that university researchers have conducted. Regulatory approval would additionally be required for integrating these models with the AV’s controls so that they can actually drive the vehicle, Wang said.

In the meantime, Wang and his students are continuing to conduct experiments that may help the industry explore the addition of large language models to AVs.

Since their study testing ChatGPT, the researchers have evaluated other public and private chatbots based on large language models, such as Google’s Gemini and Meta’s series of Llama AI assistants. So far, they’ve seen ChatGPT perform the best on indicators for a safe and time-efficient ride in an AV. Published results are forthcoming.

Another next step is seeing whether the large language models of different AVs could talk to each other, such as to help AVs determine which should go first at a four-way stop. Wang’s lab also is starting a project to study the use of large vision models to help AVs drive in extreme winter weather common throughout the Midwest. These models are like large language models but trained on images instead of text. The project will be conducted with support from the Center for Connected and Automated Transportation (CCAT), which is funded by the U.S. Department of Transportation’s Office of Research, Development and Technology through its University Transportation Centers program. Purdue is one of the CCAT’s university partners.

The experiments Wang’s lab conducted on integrating large language models into an AV were supported by gift funding from Toyota Motor North America. Wang is the assistant director of the Institute for Control, Optimization and Networks at Purdue, which is affiliated with the university’s Institute for Physical Artificial Intelligence, a Purdue Computes initiative.

About Purdue University

Purdue University is a public research institution demonstrating excellence at scale. Ranked among top 10 public universities and with two colleges in the top four in the United States, Purdue discovers and disseminates knowledge with a quality and at a scale second to none. More than 105,000 students study at Purdue across modalities and locations, including nearly 50,000 in person on the West Lafayette campus. Committed to affordability and accessibility, Purdue’s main campus has frozen tuition 13 years in a row. See how Purdue never stops in the persistent pursuit of the next giant leap — including its first comprehensive urban campus in Indianapolis, the Mitch Daniels School of Business, Purdue Computes and the One Health initiative — at https://www.purdue.edu/president/strategic-initiatives.

Paper

Personalized autonomous driving with large language models: field experiments

27th IEEE International Conference on Intelligent Transportation Systems

DOI: 10.48550/arXiv.2312.09397

Media contact: Kayla Albert, 765-494-2432, wiles5@purdue.edu

Note to journalists:

High-resolution photos and b-roll showing a demonstration of these experiments are available on Google Drive. A video of Ziran Wang talking about this research is available to media who have an Associated Press subscription.